Everything Everywhere All at Once’s Daniel Kwan is still working on his next narrative thrill ride, but first he is helping spotlight both the dangers and promises of artificial intelligence with The AI Doc: Or How I Became an Apocaloptimist.

Kwan, half of the creative team known as the Daniels, is producing the documentary co-directed by Daniel Roher, the Oscar-winning mind behind 2022’s Navalny, and Charlie Tyrell, whose short documentary My Dead Dad’s Porno Tapes was put on the 2019 Oscars shortlist. The film also puts Roher in the driver’s seat, exploring his perspective as a soon-to-be father who finds himself questioning the existential threats and potential promises of artificial intelligence.

Roher and Tyrell turned to interviewing some of the world’s leading experts in AI, in the hopes of better understanding the world Roher’s child will be raised in. Having made its world premiere at the 2026 Sundance Film Festival, and with Focus Features having already picked it up for a March 27 release, The AI Doc has largely garnered positive reviews from critics, currently holding an 80% approval rating on Rotten Tomatoes.

In honor of its SXSW showing, ScreenRant’s Ash Crossan interviewed Daniel Kwan, Charlie Tyrell, Ted Tremper, Shane Boris, Tristan Harris and Diane Becker to discuss The AI Doc: Or How I Became an Apocaloptimist. When asked about the group effort behind the film’s chronicling of artificial intelligence, Becker began by acknowledging “it takes a lot of brains to talk about AI,” describing it as a “Sisyphean task” given how much information there is to cover:

Diane Becker: I think the producing team tackled it from everywhere — from the essay, the writing, the just breaking down the story, rebreaking the story, with the entire team, with Charlie and Daniel. There are a lot of logistical things to deal with.

Tremper, whose prior experience includes producing multiple episodes of The Daily Show, went on to share that The AI Doc’s creative team “interviewed over 40 people on camera,” while also doing “over 100 background interviews with confidential sources.” This all resulted in them having “over 3,300 pages of transcripts” to parse through to find their narrative, which also “isn’t including Daniel [Roher]’s family archive,” adding up to “hundreds of hours” of material going back to the 1940s:

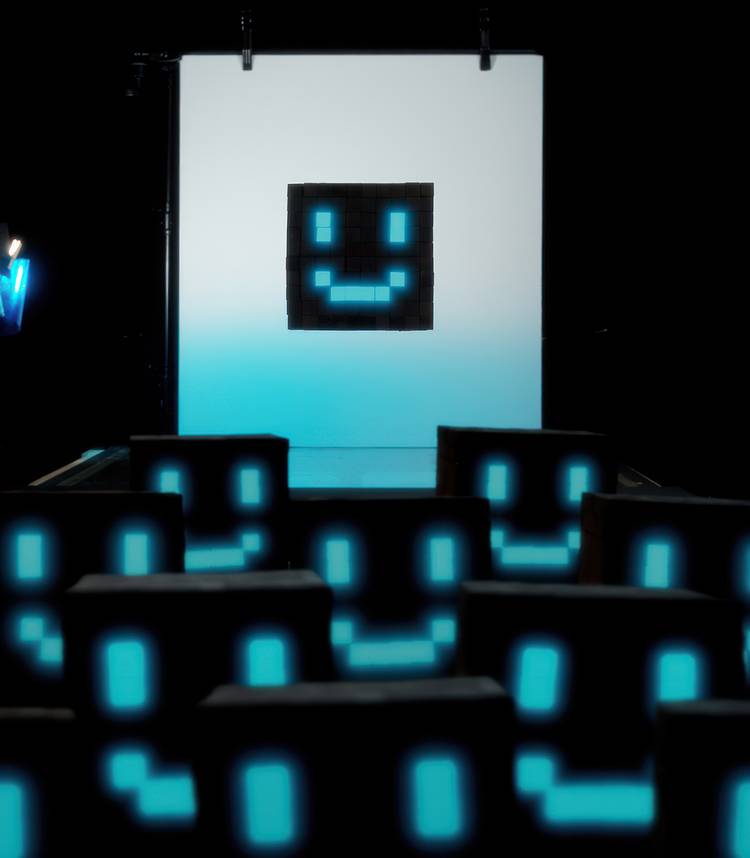

Ted Tremper: Yeah, and drawings, and decades of journals. And so that sort of tactile, handmade quality of the film is so important, because we wanted it to be sort of the opposite of what one would expect an AI doc to be about. It’s not very technical, honestly, like it’s very, very human. It’s very, very handmade. So synthesizing all of those things, it took five producers, it took two directors, it took two editors, it took a story producer, it took an amazing co-producer, an associate producer. It was just full on, for the eight months that became two and a half years.

The AI Doc Team Don’t Want Audiences To Be Overwhelmed By Artificial Intelligence

ScreenRant: So tell me about why you wanted to tackle the subject of AI.

Charlie Tyrell: It wasn’t something I sought out. It’s something that found me. I’m colleagues and friends with Daniel Roher. We’re both Toronto guys, and have wanted to do something together for a while. And then Daniel Kwan reached out to Daniel, in the afterburn of his Navalny awards campaign, and Oscar and whatnot. So, he was pretty busy. And Dan Kwan said, “I’m interested in making this AI doc, are you interested too?” And Roher said, “Yeah, but you need a co-director,” so I got joined into this whole thing.

ScreenRant: You two have been sounding the alarm bells for quite some time. So what do you hope that this doc in particular kind of … What did you want to share with the audience?

Tristan Harris: Yeah. I mean, we were really inspired by the history of the film, The Day After. That was a film in 1982, I think, about what would happen if there was a full nuclear war. And people often analogize that AI is like nuclear weapons, except it’s also nuclear weapons that can give you cancer drugs, boost GDP, boost scientific advantage. So it’s a very confusing topic. And I think what Kwan just said, the whole point is: if the world can have clarity, around something that feels overwhelming, confusing, complex, if we have clarity, and there’s common knowledge with that clarity, I know that you know, that I know, and you know that I know, that we know, that can lead to actual action. And when we’re in this kind of splintered reality, ironically, because the first contact with AI, which is social media, then we can’t have that.

Daniel Kwan: I think another thing we want everyone to understand is the concept of ‘the resource curse,’ which applies to countries very rich in resources, like Venezuela. Something awkward happens when a country’s GDP becomes dependent on a resource that isn’t people. The government says, “Well, I don’t get any extra power, money, or economic return by investing in my people versus investing in infrastructure for oil.” So they invest everything in infrastructure for oil. We’re about to hit the intelligence curse: as the GDP of countries becomes dependent on AI, putting money into data centers that grant economic, scientific, and military dominance, what is the incentive for countries? Is it to invest in people or is it to invest in AI? In fact, recently, Sam Altman said, when he was asked about how much energy and water does AI take, and he says, “Well, how much energy and water resources does it take to grow a human intelligence?” You can already feel the ‘who deserves it more’ questions starting to arise. There is a moment we have before corporations no longer need us for labor and governments no longer need us for taxes, or while our voice still has a say, but that’s a short window. And so, the point of this film is to give everyone the clarity, that the default path is actually an anti-human future. And then it almost has to look like a charity, and if we don’t want that anti-human future, we need to do something about it, and that’s what I think this is trying to say.

ScreenRant: I mean, it’s been three years since Everything Everywhere All at Once, my favorite, just an Oscar darling. But why was this something you wanted to be involved in? And as a filmmaker, what protections do you feel need to be in place for filmmakers?

Daniel Kwan: I mean, the short of it is, I’m very cognizant of how technology affects society, and especially how technology affects my ability, as a storyteller, to create in the world, and to reach audiences. And so, I’ve been following the tech world for a while, and that’s how I met Aza and Tristan, and when they came to me three years ago, they said the world’s not ready for AI. They don’t know what’s coming down the pike, and we have to create something that can make sense of it for everyone, ’cause it’s going to be very confusing. It’s going to be very overwhelming. And if we don’t have clarity, together, as a community, as a country, as a global human community, we won’t be able to move towards action. And so I think that’s the most important thing. We can’t allow ourselves to get stuck in gridlock, get stuck in infighting, get stuck in sort of, this messy, chaotic space right now, that we’re stuck in. We have to lift ourselves up out of that, find clarity and move towards action. And these two thought a movie would be a good first step. And so that’s the short answer for that. It’s not that short. And as far as protections for filmmakers, it goes much further than just protections for filmmakers, because it’s really just about the creative spirit. And we have to have questions beyond sort of, protections and regulations, and move into more of the spiritual, and the very human questions of like, “What are we actually doing on this planet, and why do we have to create? Why are we storytellers?” Because if we can’t answer those questions, we won’t be able to know what to do with AI.

ScreenRant: I mean, I’ve seen the film, and for me, it was like, that was one of the most — the best things that is like, it was very accessible for me, for something that to me is just like, “Oh, whoa, I don’t know. I don’t know what this is.” What is an apocaloptimist?

Ted Tremper: So an apocaloptimist, in terms of its actual definition to me, is someone who believes, and this is sort of — a subject in the film, for people who’ve not seen it, describes themselves as being an apocaloptimist. And you see the moment in the film when Daniel sees this, and really sort of identifies with this. And my definition is a person who believes that we can get past the catastrophic potential futures that we have, if we are able to unite, and sort of act in a coordinated fashion. So, basically, if we can coordinate together as a species, we can get past the clear, and unclear, catastrophic outcomes that we’re facing.

ScreenRant: Is there anything I have not mentioned that you feel is important, that you want audiences to know before they see the film?

Shane Boris: There’s another meaningful moment in the film, when one of the subjects says that, “Intelligence is our capacity to answer questions, but wisdom is our ability to ask the right questions.” And so what I think we hope in this film, is that it sets that level, it sets that ground, where we can ask questions of ourselves like, “Who do we want to be and what kind of world do we want to live in, and feel and believe,” that we have the capacity to move towards that. And that feels like a really critical part of what we tried to create.

Tristan Harris: I think what I want people to know about the film, is that by watching it, it gives you your own tools to be able to predict the future, because it’s very confusing. Which way is AI going to go? It’s giving us simultaneous utopia and dystopia. And the first act, the first part of the film, sort of tells you what AI is, how it works, and what are its consequences, like what could go wrong? The second part, sort of says, well, what could go right? It sort of tells you what the possibility is of technology. And the third act tells you how to discover what is the probable of technology. What way will it likely go? And social media, sort of like, the thing that we have as our background, we were able to predict in 2013, each way social media would go. Will we give it all the most beautiful parts, or will it actually create the most narcissistic, backsliding, anxious generation? And of course, we live in that one. And so the film teaches you how to discover the probable outcome of AI, which ends us in this much more like antithetical future. Not so that you end in depression, but so that you end in clarity, giving us all the agency to start putting our hand on the steering wheel.

ScreenRant: Charlie, what did you learn about yourself as a filmmaker making this, and is there anything that you now want to do post-The AI Doc?

Charlie Tyrell: This was a project that we dove into feet first, where there wasn’t really a plan on what we were even making when we first started. We were looking at doing it with narrative elements, as well. I think the first conversation was like a 45-minute piece, and then it quickly expanded. It was like a day before things expanded out, and it was supposed to be an eight-month timeline to get it out before the election, to just go, go, go, and when we started untangling the subjects, and we started kind of feeling through the dark, collectively, to figure out what this film needed to be, and what we need to say with it, that just kind of threw the timeline out the window. We just really had to just keep hammering, collectively as a group, to figure out what this film was. It wasn’t made in a very organized way, and I don’t mean that it was made in a messy way. Choosing what we had to say was the challenge. The story was the disorganized part, because it’s such a broad topic, and it’s a topic that there are levels of depth that you can go to tell this story, that are probably going to alienate certain audience members, because it gets too hard to comprehend. So, we had to find a way to make this topic accessible. We keep referring to it as a first date for people, to ask the questions that the general public probably wants to ask. Daniel, as a subject in the film, is that proxy for the audience. But what I learned about myself as a filmmaker, is I’m not a very egotistical guy. Generally, I like collaborating with people, but this was a big collaboration. This was a big team, and a lot of elements and how to give yourself over to working as one cohesive unit, and one team, rather than really kind of sticking to your traditional practices and processes as a filmmaker. It was just really a way to just be open, because we all need to be open together to figure out AI. And the film was like a little, kind of Petri dish of the actual AI issue.


The AI Doc: Or How I Became an Apocaloptimist hits theaters on March 27!

- Release Date: March 27, 2026
- Runtime: 104 Minutes
- Director: Josef Beeby, Charlie Tyrell, Daniel Roher